- Ready-to-use descriptors that wrap a specific model,

- A general interface to call other suitable models you select.

- You know how to use descriptors to evaluate text data.

Imports

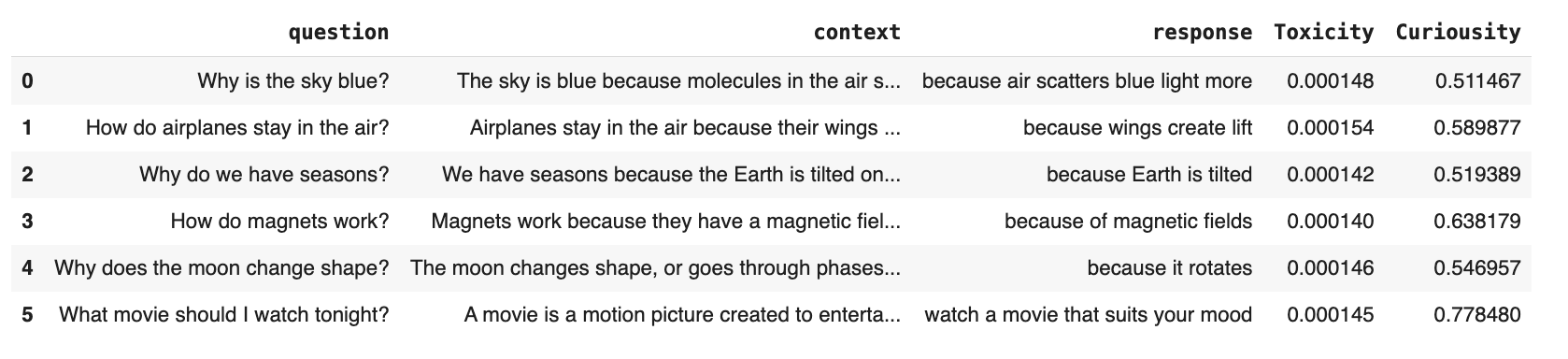

Toy data to run the example

Toy data to run the example

To generate toy data and create a Dataset object:

Built-in ML evals

There are built-in evaluators for some models. You can call them like any other descriptor:Custom ML evals

You can also add any custom checks directly as a Python function.

HuggingFace() descriptor to call a specific named model. The model you use must return a numerical score or a category for each text in a column.

For example, to evaluate “curiousity” expressed in a text:

Sample models

Here are some models you can call using theHuggingFace() descriptor.

| Model | Example use | Parameters |

|---|---|---|

Emotion classification

| HuggingFace("response", model="SamLowe/roberta-base-go_emotions", params={"label": "disappointment"}, alias="disappointment") | Required:

|

Zero-shot classification

| HuggingFace("response", model="MoritzLaurer/DeBERTa-v3-large-mnli-fever-anli-ling-wanli", params={"labels": ["science", "physics"], "threshold":0.5}, alias="Topic") | Required:

|

GPT-2 text detection

| HuggingFace("response", model="openai-community/roberta-base-openai-detector", params={"score_threshold": 0.7}, alias="fake") | Optional:

|

- Output a single number (e.g., predicted score for a label) or a label, not an array of values.

- Can process raw text input directly.

-

Name labels using

labelorlabelsfields. -

Use methods named

predictorpredict_probafor scoring.